As I slowly but surely work through catching up on lectures and revision, one of the questions I’ve found myself sitting with is this:

What’s the value of studying mathematics in a world where GenAI can already solve so many of the problems we’re asked to work through? And what does this mean for exams?

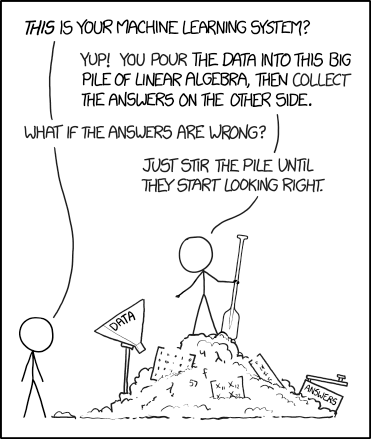

I don’t think that’s a silly question to ask, because a good chunk of my time is spent doing things that look increasingly automatable. Solving a simple ODE. Diagonalising a matrix. Writing some Python code to solve an optimisation problem. GenAI can already do a surprising amount of that, and often very efficiently.[1]

So if answers are becoming easier to generate, what actually remains valuable?

Beyond Getting Answers

I think the first thing worth saying is that mathematics has never really been about just producing answers. AI is very impressive when the problem is already clearly stated. But real problems rarely appear like that. With difficult concepts, the hardest part is often not solving the problem, but figuring out what the problem even is. What can be assumed? What matters? What can be ignored? What’s the right model? What would count as a sensible answer?

An answer can look elegant and still be completely incorrect. A proof can seem plausible while relying on something unjustified. A model can produce clean results while being built on assumptions that do not fit reality at all. The instinct to question, test and challenge what is in front of you matters enormously.

Mathematics teaches you not just how to solve structured problems, but how to think clearly in situations where the structure is not obvious yet. How to reason carefully, how to tolerate uncertainty, and how to build something coherent out of confusion. And in a world where answers are increasingly easy to generate, I think those skills become more valuable, not less. That kind of judgment feels much harder to automate, and I think it’s one of the most underrated mathematical skills.

I had the pleasure of attending James Maynard’s lecture yesterday at the Royal Institution on Sophie Germain and prime numbers[2], and one of the things that really stayed with me was the beauty of the ideas people come up with. It’s funny how some of the most interesting mathematical thinking comes not from being right immediately, but from making a mistake that reveals more about a problem than the ‘correct’ path would have done.

There is value in getting things wrong if the wrongness is thoughtful. There is value in being playful enough to ask ‘what happens if I try this?’ even when you are not at all sure it will lead anywhere useful. And I think it’s an amazingly human thing to have a strange idea, chase it for a while, and discover that even the failed attempt changed the way you see the problem. That, to me, feels like part of the value of mathematics too.

Exams and Assessments

I’ve also been thinking a bit about what this means for exams, because this isn’t the first time technology has changed how we do and assess mathematics.

Calculators changed what students were expected to do by hand. Log tables became obsolete, certain forms of tedious arithmetic stopped being central, and formula books shifted the emphasis away from memorising everything and towards applying ideas. And that was a good thing. It allowed assessment to move, at least a little, away from raw computation and towards interpretation and understanding.

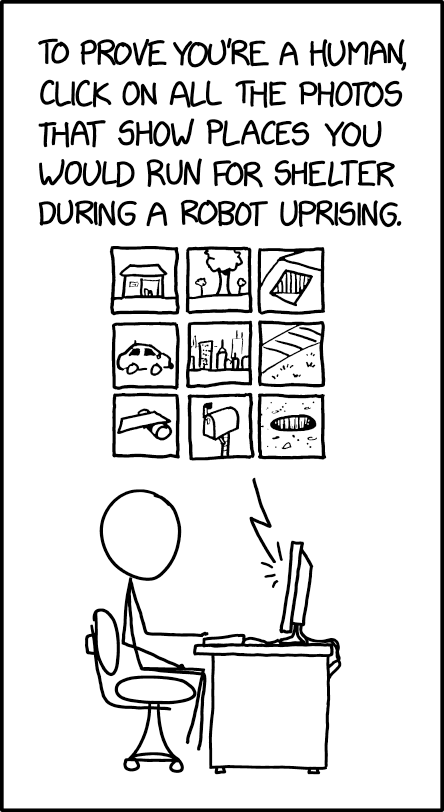

So if AI is now changing what it means to ‘do’ mathematics, then I don’t think assessments in their current form are fully doing justice to what mathematical understanding actually looks like.

I’ve never been fond of exams, but I’ll admit they still reward some genuinely useful things: speed, familiarity, pattern recognition, and the ability to perform under pressure in a silent room with no external help. Fluency, confidence and being able to think under pressure are all skills that matter.

But I also think there is a difference between what’s easy to assess and what’s actually worth assessing.

If AI can generate solutions, code, explanations, derivations, and even plausible-looking proofs, then surely the response should not just be to push more assessment into exam conditions so that students can prove they can still do everything without it.

I don’t think the goal should be to design assessments where AI is useless. That’s a losing game, because the tools will keep improving. A better goal would be to design assessments where using AI badly is useless, and using it well still requires genuine understanding.[3]

That is obviously much easier said than done. But if we keep designing assessments around what AI is getting better and better at doing, then we risk measuring the wrong thing altogether. And that feels especially wrong in maths of all subjects.

Mathematics is supposed to be one of the deepest training grounds for reasoning and critical thinking, and it would be a shame if we reduced all of that to who can most efficiently survive ridiculous performative exercises under exam conditions.

1. For the purpose of this post, I’m setting aside the environmental cost of LLMs. That’s an issue for another rant.

2. A recording of the talk can be found on the Oxford Mathematics YouTube channel.

3. I’m not claiming to have a perfect blueprint for what that looks like in practice, but I do think that’s a far better question than just ‘how do we stop students using it?’ It might mean placing more emphasis on interpretation, critique, modelling, and explanation rather than just answer generation.